Case

We use naming conventions in Synapse, but sometimes it's just a lot of work to check if everything is correct. Is there a way to automatically check those naming conventions?

Figure: Automatically check Naming Conventions

Solution

You can use a PowerShell script to loop through the Synapse JSON files that are stored in the repository. Prefixes for Linked Services, Datasets, Pipelines, Notebooks, Dataflows and scripts are easy to check, since that is just a matter of checking the filename of the JSON file. If you also want to check activities, or use the Linked Service or Dataset type in the naming convention, then you also need to check the contents of those JSON files.
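To make the two kinds of checks concrete, here is a minimal Python sketch (not the PowerShell script from the repository). It assumes one sub folder per Synapse part, each holding one JSON file per item, and a pipeline JSON with its activities under `properties.activities`. The folder names, the prefixes and the function names are all assumptions chosen for illustration, and only top-level activities are checked.

```python
import json
from pathlib import Path

# Hypothetical mapping: repository folder -> required filename prefix.
FOLDER_PREFIXES = {
    "linkedService": "LS_",
    "dataset": "DS_",
    "pipeline": "PL_",
    "notebook": "NB_",
}

# Hypothetical mapping: activity type -> required activity name prefix.
ACTIVITY_PREFIXES = {
    "Copy": "CP_",
    "ForEach": "FE_",
}


def check_file_names(root):
    """Return error messages for JSON files whose name lacks the folder's prefix."""
    errors = []
    for folder, prefix in FOLDER_PREFIXES.items():
        directory = Path(root) / folder
        if not directory.is_dir():
            continue  # this part of Synapse is not used in the workspace
        for path in sorted(directory.glob("*.json")):
            if not path.stem.startswith(prefix):
                errors.append(f"{folder}: '{path.stem}' should start with '{prefix}'")
    return errors


def check_activities(pipeline):
    """Return error messages for top-level activities with a wrong prefix."""
    errors = []
    for activity in pipeline.get("properties", {}).get("activities", []):
        name = activity.get("name", "")
        kind = activity.get("type")
        prefix = ACTIVITY_PREFIXES.get(kind)
        # Activity types without a configured prefix are skipped.
        if prefix and not name.startswith(prefix):
            errors.append(f"activity '{name}' ({kind}) should start with '{prefix}'")
    return errors


def check_pipeline_files(root):
    """Open every pipeline JSON file and check the activities inside it."""
    errors = []
    directory = Path(root) / "pipeline"
    if directory.is_dir():
        for path in sorted(directory.glob("*.json")):
            pipeline = json.loads(path.read_text(encoding="utf-8"))
            errors.extend(check_activities(pipeline))
    return errors
```

The filename check never opens a file, which is why it is the easy case. The activity check has to parse the JSON first, and the same approach would apply when the prefix depends on the Linked Service or Dataset type.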

There are a lot of different naming conventions, but as long as you commit to one, it will make your Synapse workspace more readable and your logs easier to understand. At a glance you will see which part of Synapse is causing an error. Above all, it looks more professional and it shows you took the extra effort to make it better.

However, having a lot of different naming conventions also makes it hard to create one script to rule them all. We created a script that checks the prefixes of all the different parts of Synapse, and you can configure those prefixes in a JSON file. You can run this script once in a while to occasionally check your workspace, or run it as a validation step for a pull request in Azure DevOps. Then it acts like a gatekeeper that doesn't allow badly named items. You can make it a required step, in which case you first have to solve the issues, or make it an optional check, in which case you will get the result but can choose to ignore it. For new projects you should make it required. For big existing projects you should probably make it optional for a while first and then change it to required.
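The idea of configurable prefixes combined with a gatekeeper can be sketched in a few lines of Python. This is only an illustration: the config layout below is hypothetical and may differ from the JSON config example in the repository, and the function names are made up for this sketch. The one general fact it relies on is that a pipeline step fails when the script ends with a non-zero exit code.

```python
import json

# Hypothetical config layout; the real config file may look different.
EXAMPLE_CONFIG = """
{
  "prefixes": {
    "pipeline": "PL_",
    "dataset": "DS_",
    "linkedService": "LS_"
  }
}
"""


def load_prefixes(config_text):
    """Parse the JSON config and return the part -> prefix mapping."""
    return json.loads(config_text)["prefixes"]


def validate(items, prefixes):
    """items is a list of (part, name) tuples; return the ones that break the rules."""
    return [
        (part, name)
        for part, name in items
        if part in prefixes and not name.startswith(prefixes[part])
    ]


def exit_code(violations):
    """A non-zero exit code fails the step, which blocks a required validation."""
    return 1 if violations else 0
```

A script built this way would end with something like `sys.exit(exit_code(violations))`. Whether that failure blocks the pull request or only shows up as a warning is then decided by the Required or Optional setting of the validation, not by the script.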

The PowerShell script, the YAML file and the JSON config example are stored in a public GitHub repository. This allows us to easily improve the code for you and keep this blog post up to date. It also allows you to help out by making suggestions or even by writing some better code.

1) Folder structure repository

Just like the Synapse Deployment scripts, we store these validation files in the Synapse repository. We have a CICD and a SYN folder in the root. SYN contains the JSON files from the Synapse workspace. The CICD folder has three sub folders: JSON (for the config), PowerShell (for the actual script) and YAML (for the pipeline that is required for validation).

Download the files from the GitHub repository and store them in your own repository according to the structure described above. If you use a different structure, you have to change the paths in the YAML file.

Figure: Repository structure

2) Create pipeline

Now create a new pipeline with the existing YAML file called NamingValidation4Synapse.yml. Make sure the paths in the YAML follow the folder structure from step 1, or change them to match your own structure.

If you are not sure about the folders, there is a treeview step in the YAML that will show you the folder structure on the agent. Just continue with the next steps, and after the first run check the result of the treeview step, change the paths in the YAML and run it again. You can remove or comment out the treeview step when everything works.
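If you want a similar listing when running locally, the idea behind such a step is simple: walk the folder and print every sub folder and file with indentation. The Python sketch below illustrates that idea only. It is not the treeview step from the YAML file, and `tree_lines` is a name made up for this example.

```python
import os


def tree_lines(root):
    """Return an indented listing of all folders and files below root."""
    lines = []
    root = os.path.abspath(root)
    for current, dirs, files in os.walk(root):
        dirs.sort()  # sort in place so the walk order is deterministic
        rel = os.path.relpath(current, root)
        depth = 0 if rel == "." else rel.count(os.sep) + 1
        name = root if depth == 0 else os.path.basename(current)
        lines.append("  " * depth + name + "/")
        for filename in sorted(files):
            lines.append("  " * (depth + 1) + filename)
    return lines


if __name__ == "__main__":
    # Print the tree of the current working directory.
    print("\n".join(tree_lines(".")))
```

Comparing this output with the paths used in the YAML quickly shows whether a folder such as CICD or SYN sits where the pipeline expects it.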

This example uses an Azure DevOps repository with the following steps:

- Go to pipelines and create a new pipeline

- Select Azure Repos Git

- Select the Synapse repository

- Choose Existing Azure Pipelines YAML file

- Choose the right branch and select the YAML file under path

- Save it and rename it, because the default name is the same as the repository name

Figure: Create new pipeline with existing YAML file

3) Branch validation

Now that we have the new YAML pipeline, we can use it as a Build Validation in the branch policies. The example shows how to add it in an Azure DevOps repository.

- In DevOps go to Repos in the left menu.

- Then click branches to get all branches.

- Now hover your mouse over the first branch and click on the 3 vertical dots.

- Click Branch policies

- Click on the + button in the Build Validation section.

- Select the new pipeline created in step 2 (optionally change the Display name)

- Choose the Policy requirement (Required or Optional)

- Click on the Save button

Repeat these steps for all branches where you need the extra check. Don't add it to feature branches, because it will also prevent you from making manual changes in these branches.

Figure: All branches

Figure: Required or Optional

4) Testing

Now it's time to perform a pull request. You will see that the validation is first queued, so this extra validation will take a little extra time, especially when you have a busy agent. However, you can just continue working and wait for the approval, or even press Set auto-complete to automatically complete the pull request when all approvals and validations have passed. Don't use auto-complete if you chose to make it an optional validation, because then it will be cancelled as soon as all other validations are ready.

As soon as the validation is ready, it will show you the first couple of errors.

Figure: Required Naming Convention Validation failed

You can click on those couple of errors to see the total number of errors and the first ten errors.

Figure: Get error count and first 10 errors

And you can click on one of those errors to see the entire output including all errors and all correct items.

And at the bottom you will find a summary with the total number of errors and percentages per Synapse part.

Figure: Summary example


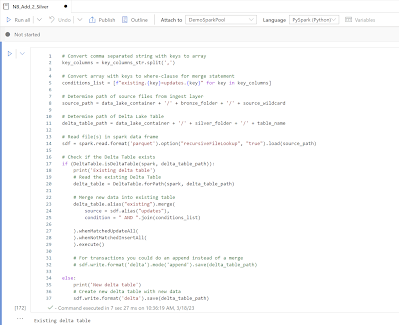
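How such a summary could be computed is sketched below in Python. This is not the logic of the actual script: the input format and the `summarize` function are assumptions, and the percentage is assumed to be the share of correctly named items per Synapse part.

```python
from collections import Counter


def summarize(results):
    """results is a list of (part, name, is_valid) tuples.

    Returns ({part: (errors, total, percent_valid)}, total_errors).
    """
    totals = Counter()
    errors = Counter()
    for part, _name, is_valid in results:
        totals[part] += 1
        if not is_valid:
            errors[part] += 1
    per_part = {}
    for part, total in totals.items():
        valid = total - errors[part]
        per_part[part] = (errors[part], total, round(100 * valid / total, 1))
    return per_part, sum(errors.values())


if __name__ == "__main__":
    demo = [
        ("pipeline", "PL_Load", True),
        ("pipeline", "Load2", False),
        ("dataset", "DS_Orders", True),
    ]
    per_part, total_errors = summarize(demo)
    for part, (bad, total, pct) in per_part.items():
        print(f"{part}: {bad} errors out of {total} ({pct}% valid)")
    print(f"Total errors: {total_errors}")
```

Reporting a percentage per part rather than only a total makes it easier to see where an existing workspace needs the most clean-up.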

You can also download your Synapse JSON files and run the PowerShell script locally with, for example, Visual Studio Code.

Figure: Running Naming Validations in VS Code

Conclusion

In this post you learned how you can automate your naming conventions check. This helps (or forces) your team to consistently use the naming conventions without you being some kind of nitpicking police officer. Everybody can just blame DevOps or themselves.

You can combine this validation with, for example, the branch validation to prevent accidentally choosing the wrong branch in a pull request.

Please submit your suggestions under Issues (as a bug report or feature request) in the GitHub repository, or drop them in the comments below.