Many IT companies have Azure subscriptions that are not primarily for customers but serve as playgrounds for building or trying things out for clients. Often you try out services like Azure SQL Data Warehouse or Azure Analysis Services, where you pay not for usage but simply because the service is turned on.

When you play around with those services and forget to turn them off afterwards, it can get a little costly, especially when dozens of colleagues are also trying out all the cool Azure stuff. How do you prevent unnecessarily high bills caused by forgotten services?

|

| Pause everything |

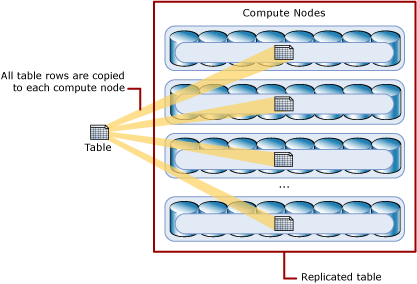

Solution

You should of course make some agreements about being careful with pricey services, but you can support that with a 'simple' technical solution: run a PowerShell script in an Azure Automation Runbook that pauses all commonly used services each night. An exception list takes care of specific machines or servers that should not be paused. For this example we will pause the following Azure services:

- Azure Virtual Machines (not classics)

- Azure SQL Data Warehouses

- Azure Analysis Services

1) Automation Account

First we need an Azure Automation Account to run the Runbook with PowerShell code. If you don't have one or want to create a new one, then search for Automation under Monitoring + Management and give it a suitable name like 'maintenance', then select your subscription, resource group and location. For this example I will choose West Europe since I'm from the Netherlands. Keep 'Create Azure Run As account' on Yes. We need it in the code. See step 3 for more details.

[Figure: Azure Automation Account]

2) Credentials

The next step is to create Credentials to run this runbook with. This works very similarly to Credentials in SQL Server Management Studio. Go to the Azure Automation Account and click on Credentials in the menu. Then click on Add New Credentials. You could just use your own Azure credentials, but the best option is to use a service account with a non-expiring password. Otherwise you need to update the credential every time the password changes.

[Figure: Create new credentials]

3) Connections

This step is for your information only and helps you understand the code. Under Connections you will find a default connection named 'AzureRunAsConnection' that contains information about the Azure environment, like the tenant id and the subscription id. To avoid hardcoded connection details we will retrieve these fields in the PowerShell code.

[Figure: Azure Connections]

4) Variables

For the exception lists we will use string variables to avoid hardcoding machine and service names in the code. Go to Variables and add three new string variables, one for each type of machine or service we need to pause. The script splits each value on semicolons, so separate multiple names with a ';'.

- ExceptionListVM

- ExceptionListDWH

- ExceptionListAAS
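To illustrate how such a semicolon-separated value ends up as an exception list, here is a minimal sketch that runs without Azure. The names and the raw value are made up for illustration, and the trimming and empty-entry filtering are an assumption added here; the runbook script itself only does a plain `-split ";"`.

```powershell
# Hypothetical value, as it might be typed into the ExceptionListVM variable
$rawValue = 'keep-vm1; KEEP-vm2;;demo-vm '

# Split on semicolons, trim stray whitespace and drop empty entries
$exceptionList = @($rawValue -split ';' |
    ForEach-Object { $_.Trim() } |
    Where-Object { $_ })

# Hypothetical names of machines found in the subscription
$allNames = @('keep-vm1', 'keep-vm2', 'demo-vm', 'scratch-vm')

# -notcontains compares case-insensitively, so 'KEEP-vm2' still protects 'keep-vm2'
$toPause = @($allNames | Where-Object { $exceptionList -notcontains $_ })

Write-Output "Would pause: $($toPause -join ', ')"
```

Without the trimming step, an entry typed as '; KEEP-vm2' would keep its leading space and would not match the machine name, so that machine would still be paused.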

[Figure: Add string variables]

5) Modules

The Azure Analysis Services methods (cmdlets) are in a separate PowerShell module which is not included by default. If you do not add this module you will get errors telling you that the method is not recognized. See below for more details.

The term 'Get-AzureRmAnalysisServicesServer' is not recognized as the name of a cmdlet, function, script file, or operable program.

Go to the Modules page and check whether you see AzureRM.AnalysisServices in the list. If not then use the 'Browse gallery' button to add it, but first add AzureRM.Profile because the Analysis module will ask for it. Adding the modules could take a few minutes!

[Figure: Add modules]

6) Runbooks

Now it is time to add a new Azure Runbook for the PowerShell code. Click on Runbooks and then add a new runbook (There are also several example runbooks of which AzureAutomationTutorialScript could be useful as an example). Give your new Runbook a suitable name like 'PauseEverything' and choose PowerShell as type.

[Figure: Add Azure Runbook]

7) Edit Script

After clicking Create in the previous step the editor will be opened. When editing an existing Runbook you need to click on the Edit button to edit the code. You can copy and paste the code below into your editor. Study the green comments to understand the code. Also make sure to compare the variable names in the code to the ones created in step 4 and change them if necessary.

[Figure: Edit the PowerShell code]

# PowerShell code

# Connect to a connection to get TenantId and SubscriptionId
$Connection = Get-AutomationConnection -Name "AzureRunAsConnection"
$TenantId = $Connection.TenantId
$SubscriptionId = $Connection.SubscriptionId

# Get the service principal credentials connected to the automation account.
$null = $SPCredential = Get-AutomationPSCredential -Name "Administrator"

# Login to Azure ($null is to prevent output, since Out-Null doesn't work in Azure)
Write-Output "Login to Azure using automation account 'Administrator'."
$null = Login-AzureRmAccount -TenantId $TenantId -SubscriptionId $SubscriptionId -Credential $SPCredential

# Select the correct subscription
Write-Output "Selecting subscription '$($SubscriptionId)'."
$null = Select-AzureRmSubscription -SubscriptionID $SubscriptionId

# Get variable values and split them into arrays
$ExceptionListAAS = (Get-AutomationVariable -Name 'ExceptionListAAS') -split ";"
$ExceptionListVM = (Get-AutomationVariable -Name 'ExceptionListVM') -split ";"
$ExceptionListDWH = (Get-AutomationVariable -Name 'ExceptionListDWH') -split ";"

################################
# Pause AnalysisServicesServers
################################
Write-Output "Checking Analysis Services Servers"

# Get list of all AnalysisServicesServers that are turned on (ProvisioningState = Succeeded)
$AnalysisServicesServers = Get-AzureRmAnalysisServicesServer |
    Where-Object {$_.ProvisioningState -eq "Succeeded" -and $ExceptionListAAS -notcontains $_.Name}

# Loop through all AnalysisServicesServers to pause them
foreach ($AnalysisServicesServer in $AnalysisServicesServers)
{
    Write-Output "- Pausing Analysis Services Server $($AnalysisServicesServer.Name)"
    $null = Suspend-AzureRmAnalysisServicesServer -Name $AnalysisServicesServer.Name
}

################################
# Pause Virtual Machines
################################
Write-Output "Checking Virtual Machines"

# Get list of all Azure Virtual Machines that are not deallocated (PowerState <> VM deallocated)
$VirtualMachines = Get-AzureRmVM -Status |
    Where-Object {$_.PowerState -ne "VM deallocated" -and $ExceptionListVM -notcontains $_.Name}

# Loop through all Virtual Machines to pause them
foreach ($VirtualMachine in $VirtualMachines)
{
    Write-Output "- Deallocating Virtual Machine $($VirtualMachine.Name) "
    $null = Stop-AzureRmVM -ResourceGroupName $VirtualMachine.ResourceGroupName -Name $VirtualMachine.Name -Force
}
# Note: Classic Virtual machines are excluded with this script (use Get-AzureVM and Stop-AzureVM)

################################
# Pause SQL Data Warehouses
################################
Write-Output "Checking SQL Data Warehouses"

# Get list of all Azure SQL Servers
$SqlServers = Get-AzureRmSqlServer

# Loop through all SQL Servers to check if they host a DWH
foreach ($SqlServer in $SqlServers)
{
    # Get list of all SQL Data Warehouses (Edition=DataWarehouse) that are turned on (Status = Online)
    $SqlDatabases = Get-AzureRmSqlDatabase -ServerName $SqlServer.ServerName -ResourceGroupName $SqlServer.ResourceGroupName |
        Where-Object {$_.Edition -eq 'DataWarehouse' -and $_.Status -eq 'Online' -and $ExceptionListDWH -notcontains $_.DatabaseName}

    # Loop through all SQL Data Warehouses to pause them
    foreach ($SqlDatabase in $SqlDatabases)
    {
        Write-Output "- Pausing SQL Data Warehouse $($SqlDatabase.DatabaseName)"
        $null = Suspend-AzureRmSqlDatabase -DatabaseName $SqlDatabase.DatabaseName -ServerName $SqlServer.ServerName -ResourceGroupName $SqlDatabase.ResourceGroupName
    }
}

Write-Output "Done"

Note 1: This is a very basic script. No error handling has been added. Check the AzureAutomationTutorialScript for an example. Fine-tune it for your own needs.
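As a starting point for the error handling that Note 1 leaves out, the following sketch wraps a single pause action in try/catch so that one failure does not stop the rest of the run. The function name `Invoke-PauseStep` and the server names are hypothetical, and the actions are simulated with plain script blocks so the example runs without Azure.

```powershell
# Hypothetical helper: runs one pause action and reports success or failure
function Invoke-PauseStep {
    param(
        [Parameter(Mandatory)] [string] $Name,
        [Parameter(Mandatory)] [scriptblock] $Action
    )
    try {
        # Discard whatever the action returns, as the main script does
        $null = & $Action
        Write-Output "- Paused $Name"
    }
    catch {
        # Log the problem and let the caller continue with the next item
        Write-Output "- Failed to pause $($Name): $($_.Exception.Message)"
    }
}

# Simulated actions: the first succeeds, the second throws
$results = @(
    Invoke-PauseStep -Name 'ok-server' -Action { 'pretend this pauses a server' }
    Invoke-PauseStep -Name 'bad-server' -Action { throw 'simulated failure' }
)
$results
```

In a real runbook the script block would contain a call such as Stop-AzureRmVM. Many cmdlet errors are non-terminating by default, so you would probably need to add `-ErrorAction Stop` to that call for the catch block to see them.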

Note 2: There are often two versions of a cmdlet, like Get-AzureRmSqlDatabase and Get-AzureSqlDatabase. Always use the one with "Rm" in it (Resource Manager), because that one is for the new Azure portal. The one without Rm is for the old/classic Azure portal.

Note 3: Because Azure Automation doesn't support Out-Null, I used another trick: assigning to $null. The Write-Output lines, however, are for testing purposes only. Nobody sees them when the runbook runs on a schedule.
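The effect of the `$null =` trick from Note 3 can be shown with a small sketch that does not need Azure. The two functions are hypothetical. They differ only in whether the return value of an inner call is discarded.

```powershell
# Without suppression: the return value of Add() leaks into the function output
function Get-Leaky {
    $list = New-Object System.Collections.ArrayList
    $list.Add('a')          # Add() returns the index of the new item (0)
    'done'
}

# With suppression: assigning to $null discards the unwanted value
function Get-Quiet {
    $list = New-Object System.Collections.ArrayList
    $null = $list.Add('a')
    'done'
}

$leaky = @(Get-Leaky)   # contains 0 and 'done'
$quiet = @(Get-Quiet)   # contains only 'done'

Write-Output "Leaky returned $($leaky.Count) items, quiet returned $($quiet.Count)"
```

The same applies to the Azure cmdlets in the script: without `$null =`, the objects they return would end up in the runbook output.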

Note 4: The code for Data Warehouses first loops through the SQL Servers and then through all databases on that server filtering on edition 'DataWarehouse'.
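The nested loop from Note 4 can be tried out on mock data. In this sketch the server and database objects are hand-made stand-ins (hypothetical names and a hypothetical `Databases` property) for what Get-AzureRmSqlServer and Get-AzureRmSqlDatabase would return. Only the filter condition is taken from the script.

```powershell
# Mock servers, each with a list of mock databases (stand-ins for the Azure objects)
$servers = @(
    [pscustomobject]@{ ServerName = 'sql-a'; Databases = @(
        [pscustomobject]@{ DatabaseName = 'dwh1';  Edition = 'DataWarehouse'; Status = 'Online' }
        [pscustomobject]@{ DatabaseName = 'appdb'; Edition = 'Standard';      Status = 'Online' }
    ) }
    [pscustomobject]@{ ServerName = 'sql-b'; Databases = @(
        [pscustomobject]@{ DatabaseName = 'dwh2';  Edition = 'DataWarehouse'; Status = 'Paused' }
    ) }
)

# Empty exception list for this example
$exceptions = @()

# Outer loop over servers, inner filter over the databases on each server
$found = @(foreach ($server in $servers) {
    $server.Databases |
        Where-Object { $_.Edition -eq 'DataWarehouse' -and $_.Status -eq 'Online' -and $exceptions -notcontains $_.DatabaseName } |
        ForEach-Object { "$($server.ServerName)/$($_.DatabaseName)" }
})

Write-Output "Would pause: $($found -join ', ')"
```

Only the online data warehouse is selected. The regular database and the already paused warehouse are skipped.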

8) Testing

You can use the Test Pane menu option in the editor to test your PowerShell script. When you click Run, the script is first queued and then started. If nothing needs to be paused the script runs in about a minute, but pausing or deallocating items takes several minutes.

[Figure: Testing the script in the Test Pane]

9) Publish

When your script is ready, it is time to publish it. Click the Publish button above the editor and confirm overwriting any previously published version.

[Figure: Publish the Runbook]

10) Schedule

Now that we have a working and published Azure Runbook, we need to schedule it. Click on Schedule to create a new schedule for your runbook. For this pause-everything script I created a schedule that runs every day at 2:00 AM (02:00). This gives colleagues who work late more than enough time to play with all the Azure stuff before their services are paused.

[Figure: Add Schedule]

Summary

In this post you saw how you can pause all expensive services in a playground environment. If a colleague doesn't want a service to be paused, we can use the variables to skip that particular service. Note that the list of services covered here is not complete. Feel free to suggest more services that can be paused in the comments.

This script requires someone to maintain the exception list variables. I have created an alternative script that uses tags instead of centralized Runbook variables. This allows you to add a certain tag to your service to avoid the pause which gives colleagues more control.
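The tag-based idea can be sketched on mock objects as follows. This is not the alternative script mentioned above. The tag name `NoPause`, its value and the resource names are all hypothetical, and the objects are hand-made stand-ins for Azure resources with a `Tags` hashtable.

```powershell
# Mock resources: one opted out via a tag, one with other tags, one without tags
$resources = @(
    [pscustomobject]@{ Name = 'vm-a'; Tags = @{ NoPause = 'true' } }
    [pscustomobject]@{ Name = 'vm-b'; Tags = @{ Owner = 'someone' } }
    [pscustomobject]@{ Name = 'vm-c'; Tags = $null }
)

# Keep every resource that does NOT carry the (hypothetical) opt-out tag
$toPause = @($resources | Where-Object {
    -not ($_.Tags -and $_.Tags.ContainsKey('NoPause') -and $_.Tags['NoPause'] -eq 'true')
})

Write-Output "Would pause: $($toPause.Name -join ', ')"
```

The check for `$_.Tags` first guards against resources that have no tags at all, which would otherwise cause an error on the `ContainsKey` call.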

Update: code for classic Virtual Machines (for separate Runbook)

# PowerShell code

# Connect to a connection to get TenantId and SubscriptionId
$Connection = Get-AutomationConnection -Name "AzureRunAsConnection"
$TenantId = $Connection.TenantId
$SubscriptionId = $Connection.SubscriptionId

# Get the service principal credentials connected to the automation account.
$null = $SPCredential = Get-AutomationPSCredential -Name "Administrator"

# Login to Azure ($null is to prevent output, since Out-Null doesn't work in Azure)
Write-Output "Login to Azure using automation account 'Administrator'."
$null = Add-AzureAccount -Credential $SPCredential -TenantId $TenantId

# Select the correct subscription (method without Rm)
Write-Output "Selecting subscription '$($SubscriptionId)'."
$null = Select-AzureSubscription -SubscriptionID $SubscriptionId

# Get variable values and split them into arrays
$ExceptionListVM = (Get-AutomationVariable -Name 'ExceptionListVM') -split ";"

#################################
# Pause Classic Virtual Machines
#################################
Write-Output "Checking Classic Virtual Machines"

# Get list of all Azure Virtual Machines that are not deallocated (Status <> StoppedDeallocated)
$VirtualMachines = Get-AzureVM |
    Where-Object {$_.Status -ne "StoppedDeallocated" -and $ExceptionListVM -notcontains $_.Name}

# Loop through all Virtual Machines to pause them
foreach ($VirtualMachine in $VirtualMachines)
{
    Write-Output "- Deallocating Classic Virtual Machine $($VirtualMachine.Name) ($($VirtualMachine.ServiceName))"
    $null = Stop-AzureVM -ServiceName $VirtualMachine.ServiceName -Name $VirtualMachine.Name -Force
}

Write-Output "Done"

Note 1: The login method name is slightly different.

Note 2: The other methods use the version without Rm in the name: Stop-AzureRmVM => Stop-AzureVM