I want to scale my Azure Analysis Services (AAS) up and down from within Azure Data Factory, but I don't want to write any code or use other Azure services like Azure Automation or Azure Logic Apps to do this. Is there an Azure Data Factory-only solution that uses only the standard ADF pipeline activities?

Save some money on your Azure Bill by pausing AAS

Solution

Yes, you can use the Web Activity to call the Rest API of Azure Analysis Services (AAS), but that requires you to give ADF permissions on AAS via its Managed Service Identity (MSI). If you already used our Process Model example, note that this setup is slightly different (and easier).

1) Add ADF as Contributor to AAS

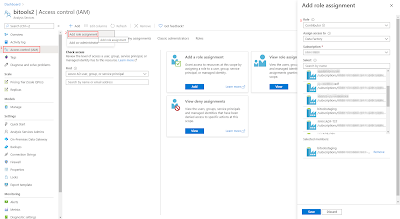

Unlike processing one of the AAS models, we don't need SSMS to add ADF as a Server Administrator. Instead we will use Access control (IAM) in the Azure portal to make our ADF a Contributor for the AAS that we want to scale.

- Go to your AAS in the Azure portal

- In the left menu click on Access control (IAM)

- Click on + Add and choose Add role assignment

- In the new Add role assignment pane select Contributor as Role

- In the Assign access to dropdown select Data Factory

- Select the right Subscription

- Now select your Data Factory and click on the Save button

Add ADF as Contributor to AAS

2) Add Web Activity

In your ADF pipeline you need to add a Web Activity to call the Rest API of Analysis Services. The first step is to determine the Rest API URL. In the string below, replace the <xxx> values with the subscription id, resource group and server name of your Analysis Services. The Rest API method we will be using is 'update':

https://management.azure.com/subscriptions/<xxx>/resourceGroups/<xxx>/providers/Microsoft.AnalysisServices/servers/<xxx>/?api-version=2017-08-01

Example:

https://management.azure.com/subscriptions/a74a173e-4d8a-48d9-9ab7-a0b85abb98fb/resourceGroups/bitools/providers/Microsoft.AnalysisServices/servers/bitools2/?api-version=2017-08-01
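If you want to generate this URL for several servers instead of editing the string by hand, the substitution can be sketched in a few lines of Python. This is only an illustration outside ADF; the function name `build_aas_url` is made up for this example, and the API version is the one used in this post.

```python
from urllib.parse import quote

# API version used in this post; newer versions may exist.
API_VERSION = "2017-08-01"


def build_aas_url(subscription_id: str, resource_group: str, server_name: str) -> str:
    """Fill the three <xxx> placeholders of the AAS Rest API URL."""
    return (
        "https://management.azure.com"
        f"/subscriptions/{quote(subscription_id, safe='')}"
        f"/resourceGroups/{quote(resource_group, safe='')}"
        "/providers/Microsoft.AnalysisServices"
        f"/servers/{quote(server_name, safe='')}"
        f"/?api-version={API_VERSION}"
    )


# Same values as the example URL above
print(build_aas_url("a74a173e-4d8a-48d9-9ab7-a0b85abb98fb", "bitools", "bitools2"))
```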

The second step is to create a JSON message for the Rest API. The tier in this message is either Developer, Basic or Standard. Within those tiers you have the (instance) name, such as B1 and B2 for Basic, or S1, S2 and so on up to S9 for Standard. Note that you can upscale from Developer to Basic to Standard, but you cannot downscale from Standard to Basic or from Basic to Developer. Since Developer has only one instance, you will probably never use that tier in this JSON message.

    {
      "sku": {
        "capacity": 1,
        "name": "S1",
        "tier": "Standard"
      }
    }

or

    {
      "sku": {
        "capacity": 1,
        "name": "B2",
        "tier": "Basic"
      }
    }
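Because the tier always has to match the instance name, the message can also be generated rather than typed. The sketch below is a hypothetical helper (`build_sku_body` is not part of ADF or any Azure SDK) that assumes the tier can be derived from the first letter of the instance name, as in the examples above.

```python
import json

# Assumption: the first letter of the instance name identifies the tier.
TIER_BY_PREFIX = {"D": "Developer", "B": "Basic", "S": "Standard"}


def build_sku_body(instance_name: str, capacity: int = 1) -> str:
    """Return the JSON message for the given instance name, e.g. 'S1' or 'B2'."""
    prefix = instance_name[:1].upper()
    if prefix not in TIER_BY_PREFIX:
        raise ValueError(f"Cannot derive a tier from instance name {instance_name!r}")
    body = {
        "sku": {
            "capacity": capacity,
            "name": instance_name,
            "tier": TIER_BY_PREFIX[prefix],
        }
    }
    return json.dumps(body, indent=2)


print(build_sku_body("S1"))
print(build_sku_body("B2"))
```

The helper only checks the first letter, so it will not catch an instance name that does not exist within a tier.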

- Add the Web activity to your pipeline

- Give it a descriptive name like Upscale AAS (or Downscale AAS)

- Go to the Settings tab

- Use the Rest API URL from above in the URL property

- Choose PATCH as Method

- Add the JSON message from above in the Body property

- Under Advanced choose MSI as Authentication method

- Add https://management.azure.com/ in the Resource property (this is different from the process example)

Web Activity calling the AAS Rest API

Then Debug the Pipeline to check the scaling action
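For reference, the settings from the list above end up in the pipeline's JSON definition. The Python sketch below assembles a comparable structure so you can see how the properties relate to each other. Treat the exact property layout as an assumption based on how ADF usually stores a Web Activity and compare it with the Code view of your own pipeline; `build_web_activity` is a made-up helper name.

```python
import json


def build_web_activity(name: str, url: str, sku_body: dict) -> dict:
    """Assemble a Web Activity definition with the settings used in this post."""
    return {
        "name": name,
        "type": "WebActivity",
        "typeProperties": {
            "url": url,
            "method": "PATCH",
            "body": sku_body,
            # MSI authentication against the Azure management endpoint
            "authentication": {
                "type": "MSI",
                "resource": "https://management.azure.com/",
            },
        },
    }


activity = build_web_activity(
    "Upscale AAS",
    "https://management.azure.com/subscriptions/<xxx>/resourceGroups/<xxx>"
    "/providers/Microsoft.AnalysisServices/servers/<xxx>/?api-version=2017-08-01",
    {"sku": {"capacity": 1, "name": "S1", "tier": "Standard"}},
)
print(json.dumps(activity, indent=2))
```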

3) Retrieve info

By changing the method from PATCH to GET (the Body property will disappear), you can retrieve information about the AAS, such as its pricing tier or status. You could, for example, use that to check the current pricing tier before changing it.

Retrieve service info via GET
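The check on the current pricing tier could look roughly like the Python sketch below. It assumes the GET response contains a top-level `sku` object with `name` and `tier`, mirroring the JSON message we send with PATCH; the sample response is trimmed and hypothetical, and `plan_scaling` is a made-up name.

```python
# Tiers ordered from lowest to highest; moving to a lower tier is not possible.
TIER_ORDER = ["Developer", "Basic", "Standard"]


def plan_scaling(server_info: dict, target_name: str, target_tier: str) -> str:
    """Return 'skip', 'blocked' or 'scale' for the requested instance."""
    current = server_info["sku"]
    if current["name"] == target_name:
        return "skip"  # already on the requested instance
    if TIER_ORDER.index(target_tier) < TIER_ORDER.index(current["tier"]):
        return "blocked"  # would require moving to a lower tier
    return "scale"


# Hypothetical, trimmed response of the GET call
sample_response = {
    "name": "bitools2",
    "sku": {"name": "S1", "tier": "Standard", "capacity": 1},
}

print(plan_scaling(sample_response, "S2", "Standard"))
print(plan_scaling(sample_response, "S1", "Standard"))
print(plan_scaling(sample_response, "B2", "Basic"))
```

Inside ADF itself the same value should be reachable with an expression along the lines of `@activity('Get AAS info').output.sku.name`, where 'Get AAS info' is whatever name you gave the GET Web Activity.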

Summary

In this post you learned how to change the pricing tier of your Analysis Services to save some money on your Azure bill. The big advantage of this method is that you don't need other Azure services, which makes maintenance a little easier. In a previous post we already showed you how to pause or resume your AAS with the Rest API.