Case

When deploying Azure Data Factory (ADF) via Azure DevOps, we often forget to hit the publish button in the Data Factory UI, and then the changes won't be deployed. Is there an easier solution?

Image: No Publish required anymore!

Solution

This year Microsoft released the ARM template export option, which doesn't require manually hitting the publish button in the Data Factory UI. This makes the Continuous Integration experience much better. In this blog post we will create a complete YAML pipeline to build and deploy ADF to your DTAP environments.

Prerequisites

- A Service Principal in the Azure Active Directory

- At least two Azure Data Factories (Dev and Prd)

- An Azure DevOps project

- A Basic or Visual Studio Enterprise/Professional license (Stakeholder access is not enough, but the first 5 users are free)

Note: At least three ADF environments are recommended. Otherwise the first deployment will always be to production, without the deployment itself ever having been tested.

1) Connect ADF Dev to a repository

First we need to connect the Development Data Factory to a Git repository. The other ADFs (test, acceptance and production) will not be connected to the Git repository. You can do this when creating your ADF, or skip that step and change the settings afterwards.

- Go to your ADF and open ADF studio. On the left side click on Manage (toolbox icon)

- Under Source Control click on Git configuration and then on the Configure button

- Select Azure DevOps Git as Repository type

- Select your Azure Active Directory and then click on the Continue button

- Now select your Azure DevOps organization name, the Project name and the Repository name. Note: you can create a new/separate repository for your Azure Data Factory within your Azure DevOps project settings.

- Next step is to select the Collaboration Branch. In most cases this is the Main branch, but some organizations use different names (and different branch strategies).

- Leave the Publish branch on adf_publish (we will not use it, no more publish buttons)

- The Root folder is also worth changing. Since we don't want all ADF files in the root of the repository, we will use /ADF/ as the root folder. All pipelines, datasets, data flows, etc. will then be stored in a subfolder called ADF.

- Uncheck the import option, since this is a new configuration (a fresh start), and then hit the Apply button

- Go to the repository in your Azure DevOps project and check the changes. You should see some new folders and files.

Image: Configure GIT in ADF

Image: ADF in the repository

2) ARM parameter configuration in ADF

The next step is not required. If you skip it, however, the build of your project in DevOps will show an annoying error (or warning) saying that it cannot find the file arm-template-parameters-definition.json and will use a default instead. See this blog post for more details.

- In the same menu as before, click on ARM template under Source Control

- Then click on Edit parameter configuration to open the editor

- Now you will see the filename mentioned above and the content of the JSON file.

- For now we will use the default content and click on the OK button. In a later post we will show you what you can accomplish by changing this file.

- Go to the repository in your Azure DevOps project and check the changes. You should now see the new JSON file.

Image: arm-template-parameters-definition.json

3) Create repository folder CICD

The repository now contains a folder called ADF that holds all components of ADF. The next step is to create another folder in the root called CICD (continuous integration, continuous deployment). This folder will store files that are used during the deployment of ADF but are not part of ADF itself, such as the YAML and PowerShell files. You can of course change that name, but keep in mind that changing it affects many of the following steps.

Also note that Git does not track empty folders, so you always have to create a file when creating a folder. For this example, call the file readme.md and use it to add documentation about the ADF CICD process (or to add a link to this page).

Within the CICD folder, create a YAML folder and a PowerShell folder. These will be filled later on in the process. For now, add another readme file to each of those folders. You can delete these once you have added other files later on.

Image: Required folder structure in DevOps
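Creating these folders in the Azure DevOps web UI works fine. If you prefer to work in a local clone of the repository, the minimal Python sketch below creates the same layout. It assumes a local clone, and it puts a placeholder readme.md in each folder so that Git has a file to track. The function name create_cicd_layout and the readme text are hypothetical.

```python
# Minimal sketch (assumes a local clone of the repository): create the CICD
# folder layout from this step with a placeholder readme.md in each folder,
# because Git only tracks files, not empty folders.
# create_cicd_layout and the readme text are hypothetical.
import tempfile
from pathlib import Path

CICD_FOLDERS = ["CICD", "CICD/YAML", "CICD/PowerShell"]


def create_cicd_layout(repo_root: Path) -> list[Path]:
    """Create each folder with a readme.md and return the readme paths."""
    readmes = []
    for folder in CICD_FOLDERS:
        target = repo_root / folder
        target.mkdir(parents=True, exist_ok=True)
        readme = target / "readme.md"
        if not readme.exists():  # never overwrite existing documentation
            readme.write_text(
                f"# {folder}\n\nPlaceholder so Git tracks this folder.\n",
                encoding="utf-8",
            )
        readmes.append(readme)
    return readmes


if __name__ == "__main__":
    # Demo against a throwaway folder instead of a real clone.
    with tempfile.TemporaryDirectory() as tmp:
        for readme in create_cicd_layout(Path(tmp)):
            print(readme.relative_to(tmp).as_posix())
```

After running it in a real clone, you would still commit and push the new files as usual.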

4) Add package.json for Npm

The next step is to add a file to the repository that contains information about an ADF module for Node.js, which we will use later on. Within the CICD folder, create a subfolder called packages and call the new file package.json.

Copy and paste the following JSON message to the new file and commit the changes to the repository.

{
    "scripts":{
        "build":"node node_modules/@microsoft/azure-data-factory-utilities/lib/index"
    },
    "dependencies":{
        "@microsoft/azure-data-factory-utilities":"^0.1.5"
    }
}

Image: package.json
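The caret in "^0.1.5" determines which version of the utilities npm installs on the agent. For a 0.x version, npm treats the minor number as the breaking-change boundary, so this range only accepts 0.1.x releases from 0.1.5 upward. The Python sketch below models that rule for plain major.minor.patch versions (pre-release tags are ignored; Python 3.9+). It is an illustration of the semver caret rule, not npm's own implementation.

```python
# Minimal sketch of npm's caret (^) rule for plain major.minor.patch versions
# (pre-release tags are ignored here), to show which versions of
# @microsoft/azure-data-factory-utilities the range "^0.1.5" accepts.
Version = tuple[int, int, int]


def parse_version(text: str) -> Version:
    major, minor, patch = (int(part) for part in text.split("."))
    return (major, minor, patch)


def caret_upper_bound(base: Version) -> Version:
    """Exclusive upper bound: bump the left-most non-zero component."""
    major, minor, patch = base
    if major > 0:
        return (major + 1, 0, 0)
    if minor > 0:
        return (0, minor + 1, 0)
    return (0, 0, patch + 1)


def satisfies_caret(version: str, range_spec: str) -> bool:
    base = parse_version(range_spec.lstrip("^"))
    return base <= parse_version(version) < caret_upper_bound(base)


if __name__ == "__main__":
    for candidate in ["0.1.5", "0.1.9", "0.2.0", "1.0.0"]:
        print(candidate, satisfies_caret(candidate, "^0.1.5"))
```

In practice this means a newer 0.2.x or 1.x release of the package would not be picked up until you change the range in package.json.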

5) Add publish_config.json

The next step is (again) not required. If you skip it, however, the build of your project in DevOps will show another annoying error (or warning) saying that it cannot find the file publish_config.json. See this post for more details. Summary:

- Add a file called publish_config.json to the repository in the root of the ADF folder

- The content of the file should be:

{"publishBranch": "factory/adf_publish"}

Image: Add the publishing branch in the following format

6) Add Service Connection

Now it's time to create a service connection in Azure DevOps with the Service Principal (SP) mentioned in the Prerequisites. You need read access to the AAD to see this SP there. This SP account will be used to deploy ADF. Make sure you have all the details, such as the Service Principal Id, Service Principal Key and Tenant Id.

- Within your DevOps project go to the Project Settings (bottom-left)

- Go to Service connections under Pipelines

- Click on New service connection, Choose Azure Resource Manager as type and click next

- Choose Service principal (manual)

- Now fill in the Service principal details and enter a name (and description) for the service connection. Make sure to verify the SP details, then click Save.

Image: Add Service Connection with SP

7) Add Variable Groups

To supply all the parameters (variables) for the YAML script, we need one general Variable Group (called ParamsGen) and one for each ADF environment (called ParamsDev, ParamsTst, ParamsAcc and ParamsPrd).

- In your Azure DevOps project go to Library in the Pipelines section

- Click on + Variable Group to create a new Variable Group called ParamsGen

- Add the following Variables:

- PackageLocation (path in Repos of package location: /CICD/packages/)

- ArmTemplateFolder (subfolder of generated ARM template: ArmTemplateOutput)

- Click on + Variable Group to create a new Variable Group called ParamsDev

- Add the following Variables:

- DataFactoryName (name of your Dev ADF connected to the Repos)

- DataFactoryResourceGroupName (resource group of your Dev ADF)

- DataFactorySubscriptionId (Azure subscription ID of your Dev ADF)

- Repeat the previous step for all the environments where you want to deploy ADF (Tst/Acc/Prd)

Image: Variable Groups
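A variable that is missing from one environment group usually only shows up as a failed deployment to that environment. The Python sketch below models the variable groups as plain dictionaries so the set of required names can be checked. It also shows the precedence that the deploy stages later in this post rely on: ParamsDev is included at pipeline level, while ParamsTst, ParamsAcc or ParamsPrd are included at stage level. We assume that later groups override earlier ones and that stage-level variables override pipeline-level ones; please verify this against the Azure Pipelines documentation for your situation. Only the variable names come from this post. All values are hypothetical placeholders.

```python
# Simplified model of the variable groups from this step. Only the variable
# names come from this post; every value below is a hypothetical placeholder.
REQUIRED_ENV_VARIABLES = {
    "DataFactoryName",
    "DataFactoryResourceGroupName",
    "DataFactorySubscriptionId",
}

PARAMS_GEN = {"PackageLocation": "/CICD/packages/", "ArmTemplateFolder": "ArmTemplateOutput"}

ENV_GROUPS = {
    f"Params{env}": {
        "DataFactoryName": f"adf-{env.lower()}",
        "DataFactoryResourceGroupName": f"rg-{env.lower()}",
        "DataFactorySubscriptionId": f"sub-{env.lower()}",
    }
    for env in ("Dev", "Tst", "Acc", "Prd")
}


def missing_variables(group: dict[str, str]) -> list[str]:
    """Required environment variables that a group does not define."""
    return sorted(REQUIRED_ENV_VARIABLES.difference(group))


def effective_variables(
    pipeline_groups: list[dict[str, str]], stage_groups: list[dict[str, str]]
) -> dict[str, str]:
    """Assumed precedence: later groups win, stage scope beats pipeline scope."""
    merged: dict[str, str] = {}
    for group in [*pipeline_groups, *stage_groups]:
        merged.update(group)
    return merged


if __name__ == "__main__":
    for name, group in ENV_GROUPS.items():
        print(name, "missing:", missing_variables(group))
    # DeployTest stage: ParamsGen + ParamsDev at pipeline level, ParamsTst at stage level.
    test_vars = effective_variables([PARAMS_GEN, ENV_GROUPS["ParamsDev"]], [ENV_GROUPS["ParamsTst"]])
    print(test_vars["DataFactoryName"])  # adf-tst
```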

8) Add YAML Build pipeline

Now it's time to create the actual pipeline. You have two options. The first is to create a new pipeline under the Pipelines section in DevOps, enter all the required YAML code and save it in the CICD/YAML folder of the repository. The second is to create a YAML file in the CICD/YAML repository folder and then create a new pipeline based on that existing file from the repository.

The first part of the pipeline where we generate an ARM template, which we will later on deploy to the other Data Factories, consists of 3 parts:

A. Variables

The YAML file starts by including the variable groups ParamsGen and ParamsDev. The other groups will be included in the deployment part.

###################################
# General Variables
###################################
variables:
- group: ParamsGen
- group: ParamsDev

B. Trigger

The trigger also belongs at the start of the YAML file. The trigger configuration determines when the pipeline will run. In this case the pipeline runs when changes are pushed to the main branch, but it ignores changes in the CICD folder. It's also possible to include the ADF folder instead; then the pipeline will only be triggered by changes in that folder.

###################################
# When to create a pipeline run
###################################
trigger:
  branches:
    include: # Collaboration branch
    - main
  paths:
    exclude:
    - CICD/*
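The trigger combines a branch filter with a path filter. The Python sketch below is a simplified model of that combination, based on our understanding of how the filters work: a push starts a run if it targets an included branch and at least one changed file falls outside the excluded paths. It uses fnmatch-style wildcards and is not the exact matching algorithm Azure Pipelines uses. The file names in the demo are hypothetical.

```python
# Simplified model of the trigger above (not Azure Pipelines' exact matching
# algorithm): a push starts a run when it targets an included branch and at
# least one changed file falls outside the excluded paths.
from fnmatch import fnmatchcase

INCLUDED_BRANCHES = ["main"]
EXCLUDED_PATHS = ["CICD/*"]


def is_excluded(path: str) -> bool:
    return any(fnmatchcase(path, pattern) for pattern in EXCLUDED_PATHS)


def triggers_run(branch: str, changed_files: list[str]) -> bool:
    if not any(fnmatchcase(branch, pattern) for pattern in INCLUDED_BRANCHES):
        return False
    return any(not is_excluded(path) for path in changed_files)


if __name__ == "__main__":
    # Hypothetical file names, for illustration only.
    print(triggers_run("main", ["ADF/pipeline/PL_Copy.json"]))  # True
    print(triggers_run("main", ["CICD/YAML/build.yml"]))  # False
    print(triggers_run("main", ["CICD/readme.md", "ADF/factory/x.json"]))  # True
    print(triggers_run("feature/x", ["ADF/pipeline/PL_Copy.json"]))  # False
```

The third case is worth noting: a commit that touches both CICD and ADF files still starts a run.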

C. Stages

The last part of the start of the file configures the first stage and its job. Besides naming those parts, you also see the workspace setting clean: all, which empties the workspace folder before the job runs. This is especially useful when you are hosting your own agent. The pool determines which agent will run the YAML pipeline. In this example it uses a Microsoft-hosted Windows agent or (see comment) an Ubuntu agent.

stages:
###################################
# Create Artifact of ADF files
###################################
- stage: CreateADFArtifact
  displayName: Create ADF Artifact
  jobs:
  - job: CreateArtifactJob
    workspace:
      clean: all
    pool:
      vmImage: 'windows-latest' #'ubuntu-latest'
    steps:

And now it's time for the first Stage called Create ADF Artifact, which consists of 6 steps and an optional debug step:

- Retrieve files from repository

- Install Node.js on agent

- Install npm package on agent

- Validate ADF

- Generate ARM template

- Publish ARM template as artifact

- Debugging, show treeview or file content

I: Retrieve files from repository

The first step is to retrieve all files from the repository to the agent that is running the pipeline. This is done via the checkout step, which has a couple of options, such as which repository to check out (self) and clean.

    ###################################
    # 1 Retrieve Repository
    ###################################
    - checkout: self
      displayName: '1 Retrieve Repository'
      clean: true

II: Install Node.js on agent

This example requires Node.js, so the next step is to install it on the agent via the NodeTool@0 task. Update: make sure to use a recent version; 14.x or even 16.x can now be used.

    ###################################
    # 2 Installs Node.js on agent
    ###################################
    - task: NodeTool@0
      displayName: '2 Install Node.js'
      inputs:
        versionSpec: '14.x' # or '16.x'
        checkLatest: true

III: Install npm package for Node.js on agent

The Npm@1 task with the command 'install' installs the ADF package mentioned in step 4. The working directory is a concatenation of the predefined variable Build.Repository.LocalPath and the user variable PackageLocation from step 7, for example D:\a\1\s + /CICD/packages/.

    ###################################
    # 3 Install npm package of ADF
    ###################################
    - task: Npm@1
      displayName: '3 Install npm package'
      inputs:
        command: 'install'
        workingDir: '$(Build.Repository.LocalPath)$(PackageLocation)' # Working folder that contains package.json
        verbose: true
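Because workingDir is built by pasting $(...) macros together, a missing or extra slash in PackageLocation goes straight into the path. The Python sketch below is a simplified model of that substitution. It relies on two assumptions about Azure Pipelines: variable lookups are case-insensitive, and a macro for an unknown variable is left unchanged. The agent path is only an example of a Microsoft-hosted Windows agent.

```python
# Simplified model of $(...) macro substitution in task inputs such as
# workingDir: values are pasted in as plain text, with no path normalisation.
# Assumptions: lookups are case-insensitive and unknown macros stay unchanged.
import re

MACRO = re.compile(r"\$\(([A-Za-z0-9_.]+)\)")


def expand_macros(template: str, variables: dict[str, str]) -> str:
    lookup = {name.lower(): value for name, value in variables.items()}

    def substitute(match: re.Match) -> str:
        return lookup.get(match.group(1).lower(), match.group(0))

    return MACRO.sub(substitute, template)


if __name__ == "__main__":
    agent_vars = {
        "Build.Repository.LocalPath": r"D:\a\1\s",  # example Windows agent path
        "PackageLocation": "/CICD/packages/",
        "ArmTemplatefolder": "ArmTemplateOutput",  # different casing still resolves
    }
    print(expand_macros("$(Build.Repository.LocalPath)$(PackageLocation)", agent_vars))
    print(expand_macros("$(PackageLocation)$(ArmTemplateFolder)", agent_vars))
    print(expand_macros("$(UndefinedVariable)", agent_vars))
```

The mixed separators in the first result (D:\a\1\s/CICD/packages/) are accepted by Windows, which is why the YAML can simply concatenate the values.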

IV: Validate ADF

This step is the equivalent of the Validate All button in the UI. The Npm@1 task with the custom command 'run build validate' validates the content of the ADF folder against the Data Factory in Development. The resource ID of that ADF is built from the values in the development variable group, which is included together with the general group at the start of the YAML file. You could skip this step, because the next step (export) seems to validate the files before it exports an ARM template. However, validation only takes a few seconds, so it doesn't really hurt.

    ###################################
    # 4 Validate ADF in repository
    ###################################
    - task: Npm@1
      displayName: '4 Validate ADF'
      inputs:
        command: 'custom'
        workingDir: '$(Build.Repository.LocalPath)$(PackageLocation)' # Working folder that contains package.json
        customCommand: 'run build validate $(Build.Repository.LocalPath)/ADF /subscriptions/$(DataFactorySubscriptionId)/resourceGroups/$(DataFactoryResourceGroupName)/providers/Microsoft.DataFactory/factories/$(DataFactoryName)'
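The second argument of the validate command is the Azure resource ID of the development Data Factory, assembled from three variables. The Python sketch below builds the same string and rejects parts that would produce a broken ID: an empty value, a value containing a slash, or a $(...) macro that was never expanded (for example because a variable group is missing). The helper names and example values are hypothetical.

```python
# Minimal sketch: assemble the Data Factory resource ID that steps 4 and 5 pass
# to the ADF utilities, and flag inputs that would produce a broken ID.
# Helper names and example values are hypothetical.


def invalid_parts(**parts: str) -> list[str]:
    """Names of parts that are empty, contain '/', or still look like an
    unexpanded $(...) macro (for example a missing variable group)."""
    return [
        name
        for name, value in parts.items()
        if not value or "/" in value or "$(" in value
    ]


def factory_resource_id(subscription_id: str, resource_group: str, factory_name: str) -> str:
    problems = invalid_parts(
        subscription_id=subscription_id,
        resource_group=resource_group,
        factory_name=factory_name,
    )
    if problems:
        raise ValueError(f"invalid resource ID parts: {problems}")
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.DataFactory/factories/{factory_name}"
    )


if __name__ == "__main__":
    print(factory_resource_id("00000000-0000-0000-0000-000000000000", "rg-adf-dev", "adf-dev"))
```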

V: Generate ARM template

This step is the equivalent of the publish button in the UI and exports an ARM template for ADF. It is nearly the same as the previous step: validate has been replaced by export, and an export location has been added at the end.

    ###################################
    # 5 Generate ARM template from repos
    ###################################
    - task: Npm@1
      displayName: '5 Generate ARM template'
      inputs:
        command: 'custom'
        workingDir: '$(Build.Repository.LocalPath)$(PackageLocation)' # Working folder that contains package.json
        customCommand: 'run build export $(Build.Repository.LocalPath)/ADF /subscriptions/$(DataFactorySubscriptionId)/resourceGroups/$(DataFactoryResourceGroupName)/providers/Microsoft.DataFactory/factories/$(DataFactoryName) "$(ArmTemplateFolder)"'
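Steps 4 and 5 differ only in the action and the extra output folder. The Python sketch below builds both argument lists side by side, mirroring the customCommand inputs above. It also makes one assumption explicit, which we infer from the targetPath used in step 6: the relative export folder is resolved against the npm working directory (the packages folder). The function names are hypothetical.

```python
# Minimal sketch of the arguments that steps 4 and 5 pass to npm.
# Assumption (inferred from step 6): the relative export folder is resolved
# against the npm working directory, i.e. the packages folder.
# Function names are hypothetical.
from typing import Optional


def adf_build_args(
    action: str, repo_root: str, factory_id: str, export_folder: Optional[str] = None
) -> list[str]:
    if action not in ("validate", "export"):
        raise ValueError(f"unsupported action: {action}")
    args = ["run", "build", action, f"{repo_root}/ADF", factory_id]
    if action == "export":
        if not export_folder:
            raise ValueError("export needs an output folder")
        args.append(export_folder)
    return args


def export_output_path(repo_root: str, package_location: str, export_folder: str) -> str:
    # Same plain-text concatenation as the targetPath input of step 6.
    return f"{repo_root}{package_location}{export_folder}"


if __name__ == "__main__":
    repo = r"D:\a\1\s"  # example agent path
    factory_id = "/subscriptions/sub-1/resourceGroups/rg-dev/providers/Microsoft.DataFactory/factories/adf-dev"
    print("npm", *adf_build_args("validate", repo, factory_id))
    print("npm", *adf_build_args("export", repo, factory_id, "ArmTemplateOutput"))
    print(export_output_path(repo, "/CICD/packages/", "ArmTemplateOutput"))
```

If you change ArmTemplateFolder, both the export argument and the publish path follow automatically, because they share the same variable.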

VI: Publish ARM template as artifact

This step publishes the exported ARM template as a pipeline artifact, so that the ARM template can be used in a later stage.

    ###################################
    # 6 Publish ARM template as artifact
    ###################################
    - task: PublishPipelineArtifact@1
      displayName: '6 Publish ARM template as artifact'
      inputs:
        targetPath: '$(Build.Repository.LocalPath)$(PackageLocation)$(ArmTemplateFolder)' # The arm template export folder
        artifact: 'ArmTemplatesArtifact'
        publishLocation: 'pipeline'
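In a later stage, the deployment needs to find ARMTemplateForFactory.json inside this artifact. The Python sketch below locates the template and checks for the top-level properties that every ARM template has ($schema, contentVersion and resources). It assumes the artifact is downloaded to $(Pipeline.Workspace)/ArmTemplatesArtifact, which we understand to be the default location for pipeline artifacts of the current run. The function name is hypothetical.

```python
# Minimal sketch for a later (deployment) stage: locate the ARM template in the
# downloaded artifact and check the top-level properties every ARM template has.
# Assumption: the artifact is downloaded to $(Pipeline.Workspace)/<artifact name>.
import json
import tempfile
from pathlib import Path

REQUIRED_KEYS = ("$schema", "contentVersion", "resources")


def load_arm_template(pipeline_workspace: str, artifact_name: str = "ArmTemplatesArtifact") -> dict:
    path = Path(pipeline_workspace) / artifact_name / "ARMTemplateForFactory.json"
    template = json.loads(path.read_text(encoding="utf-8"))
    missing = [key for key in REQUIRED_KEYS if key not in template]
    if missing:
        raise ValueError(f"{path} is missing {missing}")
    return template


if __name__ == "__main__":
    # Demo with a throwaway workspace and a tiny stand-in template.
    with tempfile.TemporaryDirectory() as workspace:
        folder = Path(workspace) / "ArmTemplatesArtifact"
        folder.mkdir()
        (folder / "ARMTemplateForFactory.json").write_text(
            json.dumps({"$schema": "https://example.invalid/schema#", "contentVersion": "1.0.0.0", "resources": []}),
            encoding="utf-8",
        )
        print(len(load_arm_template(workspace)["resources"]))
```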

VII: Debugging, show treeview or file content

A simple PowerShell step shows a treeview of files and folders and prints the content of the generated ARM template. This extra step is extremely useful because it shows you the current state of the agent. You could also add this step right after the checkout step to see the result of that step. It really helps you understand what happens after each step.

    ###################################
    # 7 Show treeview of agent
    ###################################
    - powershell: |
        tree "$(Pipeline.Workspace)" /F
        Write-host "--------------------ARMTemplateForFactory--------------------"
        Get-Content -Path "$(Build.Repository.LocalPath)$(PackageLocation)$(ArmTemplateFolder)/ARMTemplateForFactory.json"
        Write-host "-------------------------------------------------------------"
      displayName: '7 Treeview Workspace and ArmTemplateOutput content'
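tree "<path>" /F is the Windows tree command syntax. On an 'ubuntu-latest' agent, the tree command may not be installed, or it may interpret /F differently. The Python sketch below is a portable alternative that prints a similar indented listing of folders (ending in '/') and files. It is our own helper, not part of the ADF utilities. You could run it in a Python script step on either agent type.

```python
# Cross-platform sketch of the "tree /F" listing from step VII.
# Assumption: on a Linux agent "tree <path> /F" may not work, so this prints a
# similar indented listing with only the Python standard library.
import os
import sys
import tempfile


def tree_lines(root: str) -> list[str]:
    """Indented listing of folders (ending in '/') and files, sorted by name."""
    lines = []
    for current, dirs, files in os.walk(root):
        dirs.sort()  # sorting in place makes os.walk visit subfolders in order
        relative = os.path.relpath(current, root)
        depth = 0 if relative == "." else relative.count(os.sep) + 1
        indent = "  " * depth
        lines.append(f"{indent}{os.path.basename(current) or current}/")
        lines.extend(f"{indent}  {name}" for name in sorted(files))
    return lines


if __name__ == "__main__":
    if len(sys.argv) > 1:
        # For example: python tree.py "$(Pipeline.Workspace)"
        print("\n".join(tree_lines(sys.argv[1])))
    else:
        # No folder given: list a small throwaway demo folder instead.
        with tempfile.TemporaryDirectory() as demo:
            os.makedirs(os.path.join(demo, "ArmTemplateOutput"))
            open(os.path.join(demo, "ArmTemplateOutput", "ARMTemplateForFactory.json"), "w").close()
            print("\n".join(tree_lines(demo)))
```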

Image: The result of running the build part of the pipeline

9) Add release part to YAML pipeline

In the next blog post we will create a separate YAML file that does the actual deployment, including some pre- and post-deployment steps. That YAML file needs to know which Data Factory to deploy the ARM template to. If you include the specific variable group just before calling the second YAML file, that file can use those variables. Alternatively, you can provide the variable values to the second YAML file via parameters. Providing the values via parameters is probably a bit nicer, but it is also extra work, especially when there are a lot of parameters. In the stages below, each deploy stage includes its environment's variable group and also passes the values as parameters to deployADF.yml.

###################################
# Deploy Test environment
###################################
- stage: DeployTest
  displayName: Deploy Test
  variables:
  - group: ParamsTst
  pool:
    vmImage: 'windows-latest'
  condition: succeeded()
  jobs:
  - template: deployADF.yml
    parameters:
      env: tst
      DataFactoryName: $(DataFactoryName)
      DataFactoryResourceGroupName: $(DataFactoryResourceGroupName)
      DataFactorySubscriptionId: $(DataFactorySubscriptionId)

###################################
# Deploy Acceptance environment
###################################
- stage: DeployAcceptance
  displayName: Deploy Acceptance
  variables:
  - group: ParamsAcc
  pool:
    vmImage: 'windows-latest'
  condition: succeeded()
  jobs:
  - template: deployADF.yml
    parameters:
      env: acc
      DataFactoryName: $(DataFactoryName)
      DataFactoryResourceGroupName: $(DataFactoryResourceGroupName)
      DataFactorySubscriptionId: $(DataFactorySubscriptionId)

###################################
# Deploy Production environment
###################################
- stage: DeployProduction
  displayName: Deploy Production
  variables:
  - group: ParamsPrd
  pool:
    vmImage: 'windows-latest'
  condition: succeeded()
  jobs:
  - template: deployADF.yml
    parameters:
      env: prd
      DataFactoryName: $(DataFactoryName)
      DataFactoryResourceGroupName: $(DataFactoryResourceGroupName)
      DataFactorySubscriptionId: $(DataFactorySubscriptionId)
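The three deploy stages are identical apart from the environment code, the display name and the variable group. Describing them as data makes copy-paste mistakes easier to spot, such as a production stage that still points at ParamsAcc. The Python sketch below builds dictionaries that mirror the stages above. The names ENVIRONMENTS, FACTORY_PARAMETERS and deploy_stage are hypothetical, and turning the dictionaries back into YAML is left out.

```python
# Minimal sketch: the three deploy stages differ only in environment code,
# display name and variable group, so they can be described as data. The
# dictionaries mirror the YAML above; generating YAML from them is left out.
ENVIRONMENTS = [
    ("tst", "Test", "ParamsTst"),
    ("acc", "Acceptance", "ParamsAcc"),
    ("prd", "Production", "ParamsPrd"),
]
FACTORY_PARAMETERS = ["DataFactoryName", "DataFactoryResourceGroupName", "DataFactorySubscriptionId"]


def deploy_stage(env: str, display_name: str, variable_group: str) -> dict:
    return {
        "stage": f"Deploy{display_name}",
        "displayName": f"Deploy {display_name}",
        "variables": [{"group": variable_group}],
        "pool": {"vmImage": "windows-latest"},
        "condition": "succeeded()",
        "jobs": [
            {
                "template": "deployADF.yml",
                # $(...) macros are filled in at runtime from the stage's variable group.
                "parameters": {"env": env, **{name: f"$({name})" for name in FACTORY_PARAMETERS}},
            }
        ],
    }


if __name__ == "__main__":
    for stage in (deploy_stage(*environment) for environment in ENVIRONMENTS):
        print(stage["stage"], stage["variables"][0]["group"], stage["jobs"][0]["parameters"]["env"])
```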

Conclusion

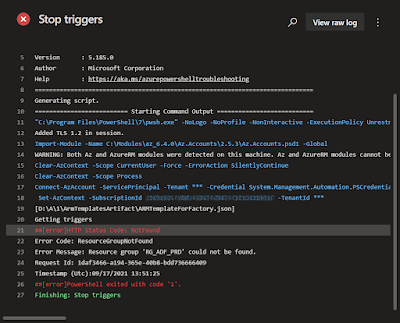

In this post you learned how to avoid the need for the publish button in Azure Data Factory. This gives you a much better CI/CD experience. Unfortunately, this is not yet available for Synapse. In the next post we will give the Service Principal access to the target Data Factories, stop the triggers, deploy the ARM template, do a little cleanup of old pipelines/datasets and then turn certain triggers on or off for that environment. Thanks to our colleague Roelof Jonkers for helping.

Now all YAML parts together:

###################################
# General Variables
###################################
variables:
- group: ParamsGen
- group: ParamsDev

###################################
# When to create a pipeline run
###################################
trigger:
  branches:
    include: # Collaboration branch
    - main
  paths:
    exclude:
    - CICD/*

stages:
###################################
# Create Artifact of ADF files
###################################
- stage: CreateADFArtifact
  displayName: Create ADF Artifact
  jobs:
  - job: CreateArtifactJob
    workspace:
      clean: all
    pool:
      vmImage: 'windows-latest' #'ubuntu-latest'
    steps:
    - checkout: self
      displayName: '1 Retrieve Repository'
      clean: true

    ###################################
    # Installs Node.js on agent
    ###################################
    - task: NodeTool@0
      displayName: '2 Install Node.js'
      inputs:
        versionSpec: '14.x' # or '16.x'
        checkLatest: true

    ###################################
    # Install npm package of ADF
    ###################################
    - task: Npm@1
      displayName: '3 Install npm package'
      inputs:
        command: 'install'
        workingDir: '$(Build.Repository.LocalPath)$(PackageLocation)' # Working folder that contains package.json
        verbose: true

    ###################################
    # Validate ADF in repository
    ###################################
    - task: Npm@1
      displayName: '4 Validate ADF'
      inputs:
        command: 'custom'
        workingDir: '$(Build.Repository.LocalPath)$(PackageLocation)' # Working folder that contains package.json
        customCommand: 'run build validate $(Build.Repository.LocalPath)/ADF /subscriptions/$(DataFactorySubscriptionId)/resourceGroups/$(DataFactoryResourceGroupName)/providers/Microsoft.DataFactory/factories/$(DataFactoryName)'

    ###################################
    # Generate ARM template from repos
    ###################################
    - task: Npm@1
      displayName: '5 Generate ARM template'
      inputs:
        command: 'custom'
        workingDir: '$(Build.Repository.LocalPath)$(PackageLocation)' # Working folder that contains package.json
        customCommand: 'run build export $(Build.Repository.LocalPath)/ADF /subscriptions/$(DataFactorySubscriptionId)/resourceGroups/$(DataFactoryResourceGroupName)/providers/Microsoft.DataFactory/factories/$(DataFactoryName) "$(ArmTemplateFolder)"'

    ###################################
    # Publish ARM template as artifact
    ###################################
    - task: PublishPipelineArtifact@1
      displayName: '6 Publish ARM template as artifact'
      inputs:
        targetPath: '$(Build.Repository.LocalPath)$(PackageLocation)$(ArmTemplateFolder)' # The arm template export folder
        artifact: 'ArmTemplatesArtifact'
        publishLocation: 'pipeline'

    ###################################
    # Show treeview of agent
    ###################################
    - powershell: |
        tree "$(Pipeline.Workspace)" /F
        Write-host "--------------------ARMTemplateForFactory--------------------"
        Get-Content -Path "$(Build.Repository.LocalPath)$(PackageLocation)$(ArmTemplateFolder)/ARMTemplateForFactory.json"
        Write-host "-------------------------------------------------------------"
      displayName: '7 Treeview Workspace and ArmTemplateOutput content'

###################################
# Deploy Test environment
###################################
- stage: DeployTest
  displayName: Deploy Test
  variables:
  - group: ParamsTst
  pool:
    vmImage: 'windows-latest'
  condition: succeeded()
  jobs:
  - template: deployADF.yml
    parameters:
      env: tst
      DataFactoryName: $(DataFactoryName)
      DataFactoryResourceGroupName: $(DataFactoryResourceGroupName)
      DataFactorySubscriptionId: $(DataFactorySubscriptionId)

###################################
# Deploy Acceptance environment
###################################
- stage: DeployAcceptance
  displayName: Deploy Acceptance
  variables:
  - group: ParamsAcc
  pool:
    vmImage: 'windows-latest'
  condition: succeeded()
  jobs:
  - template: deployADF.yml
    parameters:
      env: acc
      DataFactoryName: $(DataFactoryName)
      DataFactoryResourceGroupName: $(DataFactoryResourceGroupName)
      DataFactorySubscriptionId: $(DataFactorySubscriptionId)

###################################
# Deploy Production environment
###################################
- stage: DeployProduction
  displayName: Deploy Production
  variables:
  - group: ParamsPrd
  pool:
    vmImage: 'windows-latest'
  condition: succeeded()
  jobs:
  - template: deployADF.yml
    parameters:
      env: prd
      DataFactoryName: $(DataFactoryName)
      DataFactoryResourceGroupName: $(DataFactoryResourceGroupName)
      DataFactorySubscriptionId: $(DataFactorySubscriptionId)